TL;DR for busy leaders

In 2025, ~77% of ReportPortal launches came from a small set of agents, showing clear consolidation in real-world test frameworks.

JavaScript frameworks dominate, with Playwright leading at 29% of all launches.

Java remains stable but is no longer the default choice for new test initiatives.

Python and .NET maintain consistent, niche usage in production test stacks.

Teams use this data to plan migrations, align skills, and justify tooling investments based on evidence – not assumptions.

In 2025, nearly 77% of all ReportPortal launches came from just a handful of agents. Here’s what teams actually use to send test results into ReportPortal – and what that says about real-world test automation today.

Choosing a test framework is not just a technical decision – it’s a long-term bet on tooling, maintenance cost, and visibility into quality.

To help teams make that decision with less guesswork, we analyzed which test frameworks actually generate reporting volume in ReportPortal, how that usage has changed over time, and what practical conclusions QA teams can draw from it.

This article is based on real ReportPortal launch data from 2021–2025, so you can benchmark your stack against what other teams are running in production today.

Why agents matter

In ReportPortal, each test framework connects via an agent – a maintained integration that sends results, logs, metadata, and structure into the platform.

High usage of an agent usually means:

The framework is actively used in real CI pipelines

The agent is stable enough for daily regression runs

There is community knowledge, examples, and fewer "edge case" surprises

In other words, agent usage is a practical proxy for ecosystem maturity and integration risk.

Visibility across test frameworks

Many large projects today use multiple test frameworks at the same time. UI tests, API tests, integration checks, and legacy suites often live in different stacks – each producing its own result format, logs, and reports.

Without centralized reporting, teams quickly end up:

Jumping between HTML reports, CI logs, and raw artifacts

Manually correlating failures across pipelines

Losing historical context once runs disappear from CI storage

ReportPortal solves this by collecting test results from different frameworks, languages, and pipelines into a single reporting tool.

That’s what makes framework distribution analysis possible in the first place – and why it reflects real production usage instead of isolated experiments.

Whether your UI tests run in Playwright, your API tests in Pytest, or your suites in JUnit, all results land in one place:

One dashboard

One data model

One historical view of quality

This is why reporting volume is not just a metric – it’s a signal of what teams actually trust to run in production.

How test framework usage has changed

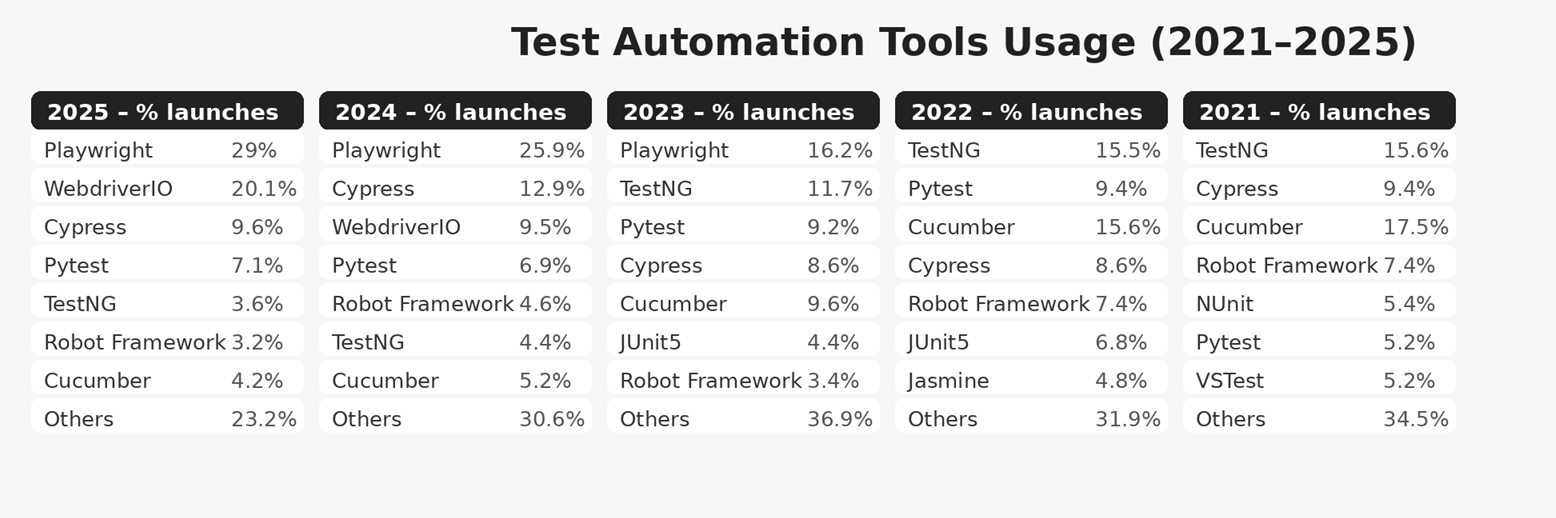

Based on % of ReportPortal launches

Looking at five years of launch data, three clear trends stand out:

JavaScript frameworks are now dominant

Java remains stable but is no longer leading

Reporting volume is consolidating around fewer tools

The growth of JavaScript testing frameworks in ReportPortal also aligns with broader ecosystem signals – including rising npm download volumes – suggesting that these trends reflect real industry adoption.

The most notable shift is the rapid rise of Playwright, which moved from a secondary position in 2023 (16.2%) to the clear #1 framework in 2024–2025, reaching 29% of all launches in 2025.

Cypress, despite periodic "end-of-life" discussions in the community, continues to show stable usage across many existing automation suites.

At the same time, former leaders like TestNG and Cucumber have steadily declined.
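For teams that want to reproduce this kind of view on their own data, here is a minimal sketch of how a "% of launches" figure and a consolidation check could be computed. It assumes you can export one record per launch with a year and a framework name; the framework names and counts below are invented for illustration and are not ReportPortal data, and `launch_shares` and `frameworks_covering` are hypothetical helper names.

```python
from collections import Counter

# Hypothetical launch log: one (year, framework) record per launch.
# Names and counts are invented for illustration, not ReportPortal data.
launches = (
    [(2025, "framework_a")] * 50
    + [(2025, "framework_b")] * 30
    + [(2025, "framework_c")] * 15
    + [(2025, "framework_d")] * 5
)


def launch_shares(records, year):
    """Return {framework: percent of that year's launches}, largest first."""
    counts = Counter(fw for y, fw in records if y == year)
    total = sum(counts.values())
    if total == 0:
        return {}
    return {fw: 100 * n / total for fw, n in counts.most_common()}


def frameworks_covering(shares, threshold_pct):
    """Pick top frameworks until their combined share reaches the threshold."""
    covered, picked = 0.0, []
    for fw, pct in sorted(shares.items(), key=lambda kv: kv[1], reverse=True):
        if covered >= threshold_pct:
            break
        picked.append(fw)
        covered += pct
    return picked, covered


shares = launch_shares(launches, 2025)
top, covered = frameworks_covering(shares, 77)
print(shares)
print(top, covered)  # ['framework_a', 'framework_b'] 80.0
```

The fewer frameworks it takes to reach the threshold, the more consolidated the reporting volume is.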

How testing ecosystems differ in practice

Behind the framework names are different testing cultures, infrastructure choices, and reporting needs.

| Ecosystem | What the data shows | What this means | Benchmark check |
|---|---|---|---|
| JavaScript | ~65–70% of ReportPortal launches by 2025. The dominant ecosystem. | Used for UI, API, and component testing with strong CI/CD integration and mature reporting practices. | If you run Playwright or WebdriverIO without centralized reporting, you are behind many similar teams. |
| Java | Still generates significant reporting volume, but its share is gradually shrinking. | Remains common for large regression suites and enterprise systems with long-lived test infrastructure. | If your project relies on static HTML reports or CI logs, you miss analytics many teams already use. |
| Python | Smaller share, but usage remains stable over time. | Popular for API, data-driven testing, and fast feedback workflows. | If Pytest results exist only as CI artifacts, you lose long-term insight into failures and trends. |
| .NET | Small but steady presence (NUnit, xUnit, related frameworks). | Typically used in long-running enterprise test suites requiring cross-project visibility. | If results stay in CI logs or local reports, you miss centralized insights into failures and trends. |

From insight to action

Framework distribution by reporting volume is not only a popularity ranking. It reflects real usage and real strategic direction.

Here is how teams use this data in practice.

1. Plan framework migrations before they become urgent

When a framework steadily loses reporting volume year over year, it rarely happens by accident.

Teams use this signal to:

Identify frameworks that are quietly becoming legacy

Audit critical test suites still running on them

Plan migrations while expertise and community support still exist

This turns migration discussions into concrete, evidence-based decisions: "This framework is down to 5% of our volume and has been shrinking for three years – let’s plan to move off it next year."
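The "down to 5% and shrinking for three years" rule can be written down as a small check. This is a sketch under stated assumptions: the yearly shares are invented, the 5% and three-year thresholds are taken from the example quote rather than from any ReportPortal recommendation, and `declining_streak` and `migration_candidates` are hypothetical helper names.

```python
# Hypothetical yearly share of launches (%) per framework; values are invented.
yearly_share = {
    "legacy_fw": {2022: 12.0, 2023: 9.0, 2024: 7.0, 2025: 5.0},
    "stable_fw": {2022: 10.0, 2023: 11.0, 2024: 10.0, 2025: 10.5},
}


def declining_streak(series):
    """Count consecutive year-over-year drops, ending at the latest year."""
    years = sorted(series)
    streak = 0
    # Walk backwards over adjacent year pairs: (2024, 2025), (2023, 2024), ...
    for prev, cur in zip(reversed(years[:-1]), reversed(years[1:])):
        if series[cur] < series[prev]:
            streak += 1
        else:
            break
    return streak


def migration_candidates(shares, max_share=5.0, min_streak=3):
    """Frameworks at or below max_share that fell min_streak years in a row."""
    result = []
    for fw, series in shares.items():
        latest = series[max(series)]
        if latest <= max_share and declining_streak(series) >= min_streak:
            result.append(fw)
    return result


print(declining_streak(yearly_share["legacy_fw"]))  # 3
print(migration_candidates(yearly_share))  # ['legacy_fw']
```

Both thresholds are parameters, so a team can tune them to its own risk tolerance before putting a framework on the migration list.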

2. Align skills, hiring, and training with real usage

Year-by-year framework distribution also acts as a skills map for the organization.

Teams use it to:

Focus internal training on frameworks that are clearly gaining share

Prioritize hiring profiles aligned with dominant CI tooling

Invest in shared libraries, templates, and best practices where adoption is growing

Instead of relying on external trend reports, teams rely on their own data: "Our ReportPortal data shows this framework doubled its volume in two years – it should be a first-class skill in our team."
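A "doubled in two years" claim is easy to verify from yearly launch counts. The sketch below assumes a per-framework mapping of year to launch count; the numbers are invented, the 2x cutoff mirrors the example quote, and `growth_ratio` and `training_priorities` are hypothetical helper names.

```python
# Hypothetical yearly launch counts per framework; values are invented.
launch_counts = {
    "rising_fw": {2023: 400, 2024: 620, 2025: 800},
    "flat_fw": {2023: 500, 2024: 510, 2025: 495},
}


def growth_ratio(series, end_year, span_years=2):
    """Return end-year volume divided by the volume span_years earlier."""
    start_year = end_year - span_years
    if start_year not in series or end_year not in series:
        return None  # not enough history to compare
    if series[start_year] == 0:
        return None  # avoid dividing by zero for brand-new frameworks
    return series[end_year] / series[start_year]


def training_priorities(counts, end_year, min_ratio=2.0):
    """List (framework, ratio) pairs that grew at least min_ratio, fastest first."""
    ranked = []
    for fw, series in counts.items():
        ratio = growth_ratio(series, end_year)
        if ratio is not None and ratio >= min_ratio:
            ranked.append((fw, ratio))
    return sorted(ranked, key=lambda item: item[1], reverse=True)


print(training_priorities(launch_counts, 2025))  # [('rising_fw', 2.0)]
```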

3. Justify investments and evaluate experiments

Multi-year reporting data connects tooling decisions with outcomes.

Teams use it to:

Evaluate whether framework pilots actually stuck

Correlate training or CI investments with real adoption

Identify high-cost frameworks that no longer justify ongoing support

In roadmap and budget discussions, this data replaces opinions with evidence.
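One way to check whether a pilot "actually stuck" is to compare launch volume during the pilot with volume afterwards. This is only a sketch: the monthly counts are invented, the 50% retention cutoff is an arbitrary assumption, and `pilot_stuck` is a hypothetical helper name.

```python
from statistics import mean


def pilot_stuck(monthly_launches, pilot_months, retention=0.5):
    """True if average monthly launches after the pilot stay at or above
    `retention` times the average during the pilot."""
    pilot = monthly_launches[:pilot_months]
    after = monthly_launches[pilot_months:]
    if not pilot or not after or mean(pilot) == 0:
        return False  # not enough data to judge
    return mean(after) >= retention * mean(pilot)


# Hypothetical monthly launch counts: three pilot months, then three more.
adopted = [40, 55, 60, 58, 70, 75]
abandoned = [40, 55, 60, 20, 5, 0]

print(pilot_stuck(adopted, pilot_months=3))    # True
print(pilot_stuck(abandoned, pilot_months=3))  # False
```

The same comparison could be run before and after a training program or CI investment to see whether adoption followed.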

Why this perspective matters

Many discussions about test frameworks stay theoretical. ReportPortal shows what teams actually connect, run, and maintain every day.

That means:

Less guesswork

Lower long-term risk

Faster time to value once reporting is integrated

Framework distribution by reporting volume turns execution data into strategic insight and helps teams evolve their testing stack deliberately, not accidentally.

By 2025, ReportPortal usage clearly shows a shift toward JavaScript-based frameworks, with Playwright leading by a wide margin.

Java remains stable, Python stays consistent, and .NET remains niche but dependable.

If your framework aligns with these trends, integration risk is low. If it doesn’t, this data gives you a realistic roadmap for what to adopt – and how to do it safely.